Collecting and crunching data in real-time

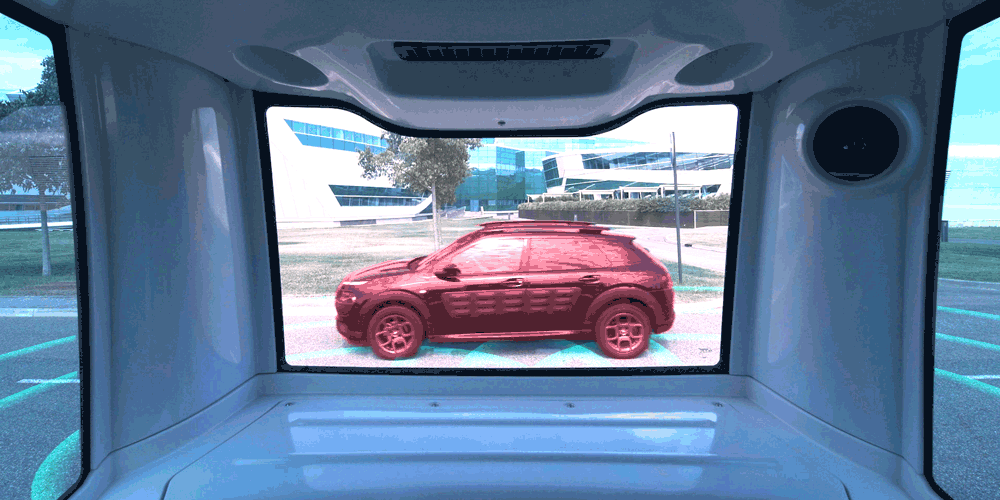

Autonomous vehicles need detailed, continuous information about their surroundings to operate safely, so they are equipped with a full range of sensors.

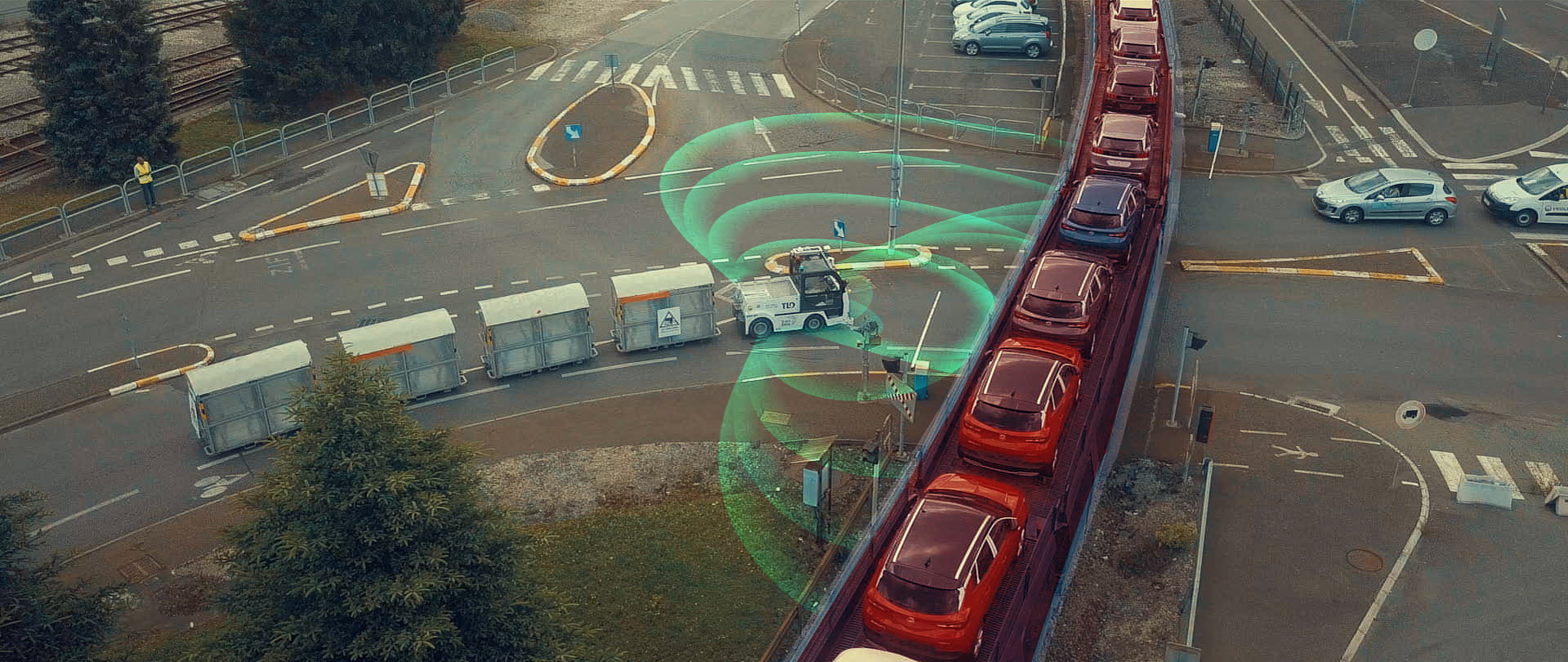

The data these sensors collect is crunched to create a 360-degree picture of the environment, including infrastructure, other vehicles, pedestrians, and anything else in the vehicle's path.

Processing this data in real time allows the driverless vehicle system to decide how to proceed safely along the road: whether to stop, go, slow down, and so on.

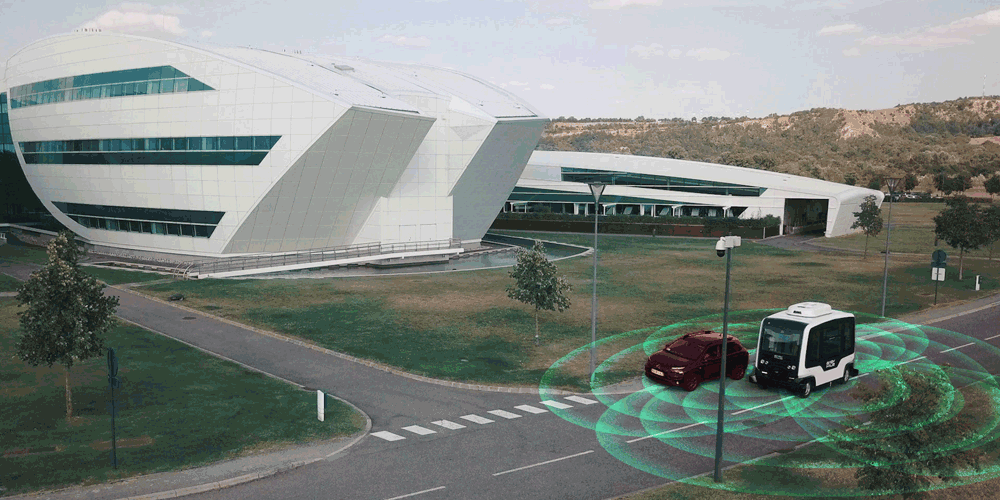

EasyMile’s unique technology allows autonomous vehicles to drive safely alongside cars, bikes, pedestrians, and other road users. The vehicles understand the environment as it is, so there is no need to build or add any extra infrastructure!